Pinecone

Managed vector database purpose-built for AI. Fast similarity search for RAG agents.

Pinecone is a managed vector database built specifically for AI applications. It handles the storage, indexing, and retrieval of high-dimensional vector embeddings — the numerical representations that modern AI models use to encode semantic meaning. Unlike general-purpose databases adapted for vector workloads (such as PostgreSQL with pgvector or Elasticsearch), Pinecone was designed from the ground up for this use case, which shows in its performance characteristics at scale.

At its core, Pinecone solves the nearest-neighbor search problem: given a query vector, find the most semantically similar vectors in a dataset that may contain hundreds of millions of entries. This is the foundational operation behind retrieval-augmented generation (RAG), semantic search, recommendation engines, and AI agent memory systems. Applications query Pinecone by passing in a vector, and Pinecone returns the top-k most similar results — typically in milliseconds, even at scale.

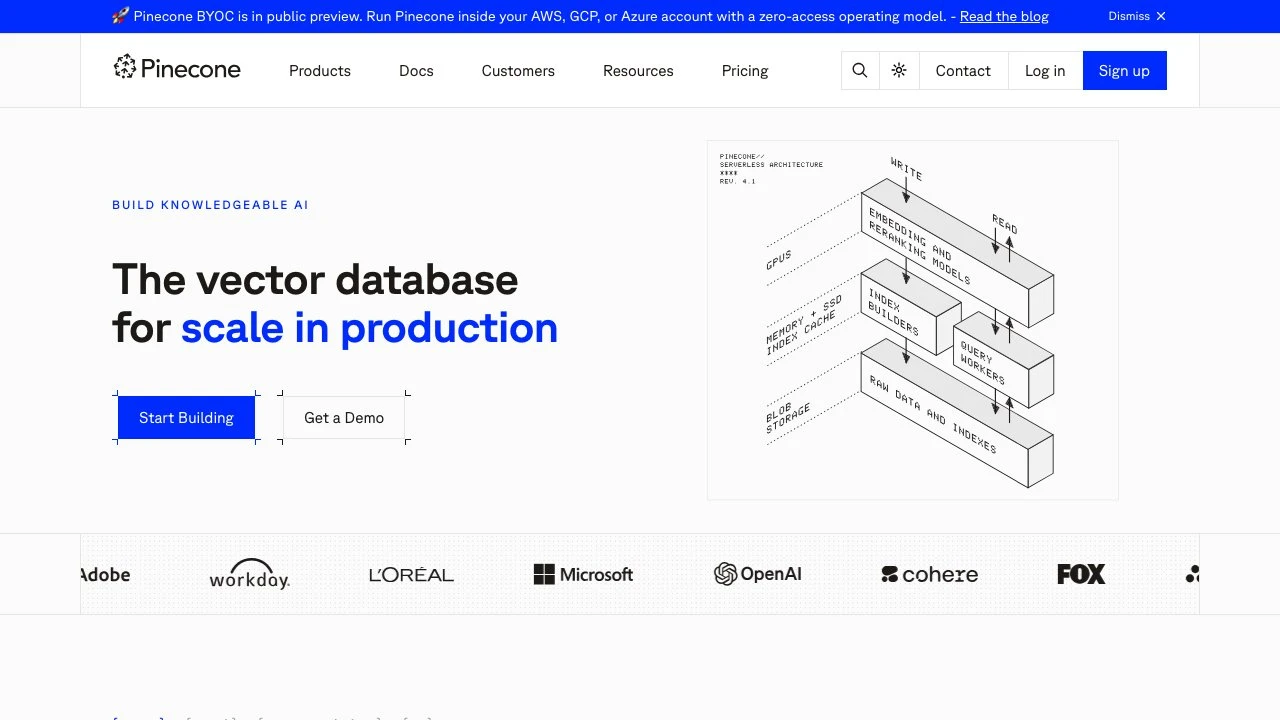

The architecture is fully serverless and managed. Developers create an index, upsert vectors with optional metadata, and query via a simple SDK available in Python, JavaScript, and other languages. There is no infrastructure to provision or tune. Vectors are indexed in real time as they are upserted, which means fresh data is immediately queryable without batch reindexing cycles that plague some alternatives.

Pinecone supports both dense and sparse vector indexes. Dense indexes handle semantic similarity through embeddings from models like OpenAI or Cohere. Sparse indexes enable exact keyword matching. Combining the two — hybrid search — gives applications the best of semantic understanding and keyword precision, which is particularly valuable for enterprise search scenarios where users expect both types of relevance.

For multi-tenant applications, Pinecone supports namespaces, which partition data within a single index. This allows a SaaS product to store one index with strict tenant isolation rather than managing hundreds of separate indexes. Metadata filtering further narrows query results to vectors matching specific attributes before similarity ranking occurs.

Pinecone also offers a reranking layer that applies a second-pass precision boost to retrieved candidates — a pattern increasingly used in production RAG pipelines to improve answer quality beyond what embedding similarity alone can provide.

On the enterprise side, Pinecone is SOC 2, GDPR, ISO 27001, and HIPAA certified. A BYOC (Bring Your Own Cloud) deployment option is in public preview, allowing organizations to run Pinecone inside their own AWS, GCP, or Azure accounts under a zero-access operating model — important for teams with strict data residency requirements.

Compared to alternatives like Weaviate, Qdrant, or Milvus, Pinecone trades self-hosting flexibility for operational simplicity. It is the right choice when teams want a production-grade vector database without managing infrastructure. Weaviate and Qdrant offer open-source self-hosted options that appeal to teams needing full control or lower costs at high volumes. pgvector suits teams already on PostgreSQL who need basic vector search without adding a separate service.

Key Features

- Serverless managed infrastructure with automatic scaling and no provisioning required

- Real-time vector indexing — upserted vectors are queryable immediately without batch reindexing

- Hybrid search combining dense semantic embeddings and sparse keyword indexes

- Metadata filtering to narrow similarity search to vectors matching specific attribute conditions

- Namespaces for multi-tenant data isolation within a single index

- Reranking support for a second-pass precision layer on top of initial retrieval results

- Hosted embedding models plus support for bringing your own vectors from any model

- BYOC deployment for running Pinecone inside your own AWS, GCP, or Azure account

Pros & Cons

Pros

- Fully managed with no infrastructure to operate, maintain, or tune

- Strong performance at scale with low-latency queries across large vector datasets

- Hybrid search (dense + sparse) handles both semantic and keyword retrieval in one system

- Enterprise compliance certifications (SOC 2, GDPR, ISO 27001, HIPAA) out of the box

- Real-time indexing means no delays between data ingestion and query availability

Cons

- No self-hosted open-source option — teams requiring full data control must use BYOC (preview)

- Serverless pricing can become expensive at very high query or storage volumes compared to self-managed alternatives

- Vendor lock-in is a consideration since Pinecone is a proprietary managed service

- Fewer configuration knobs than open-source alternatives like Qdrant or Milvus for teams wanting fine-grained index tuning

Pricing

Pinecone offers a free tier to create a first index at no cost. Beyond that, pricing is pay-as-you-go based on usage. Visit the official website for current pricing details.

Who Is This For?

Pinecone is best suited for engineering teams building production AI applications — including RAG pipelines, semantic search, conversational AI agents, and recommendation systems — who want a reliable, scalable vector database without managing infrastructure. It is particularly well-suited for enterprise teams with compliance requirements (HIPAA, SOC 2, GDPR) and multi-tenant SaaS products that need namespace-based tenant isolation at scale.