Weights & Biases

Experiment tracking, model versioning, and MLOps. Track agent training and evaluation runs.

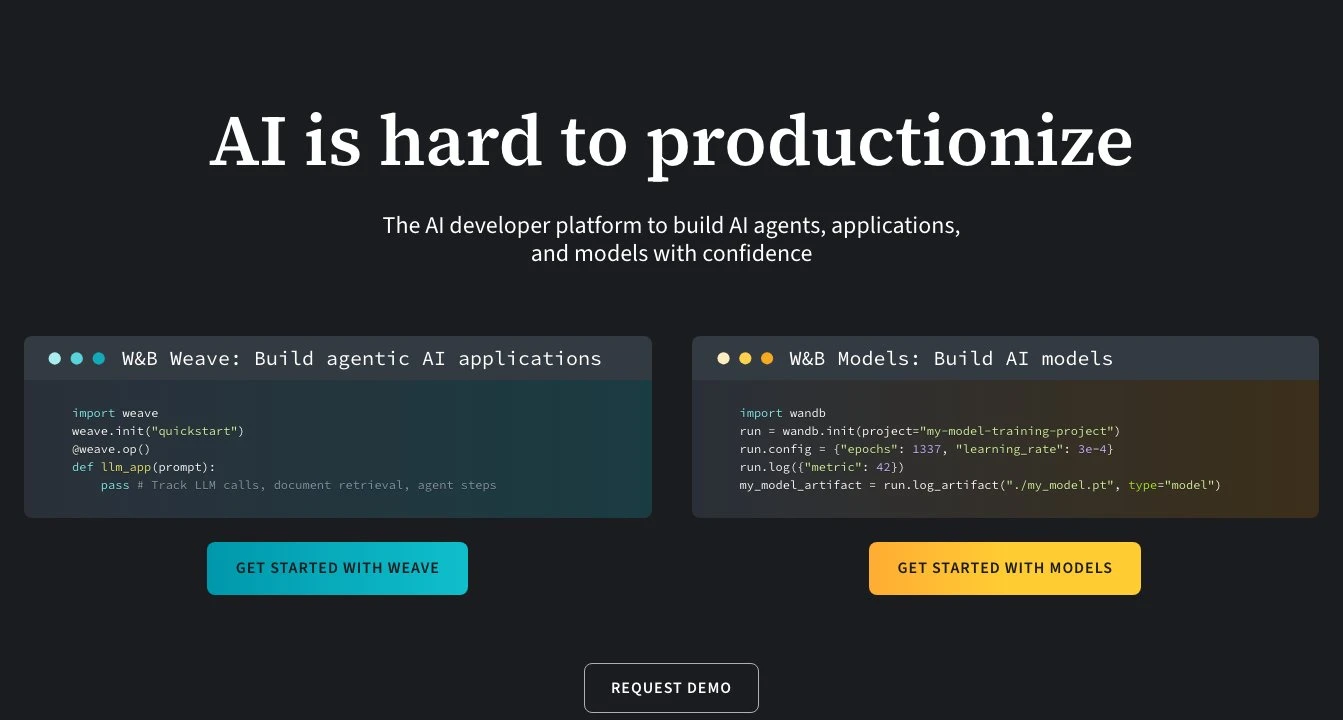

Weights & Biases (W&B) is an AI developer platform designed to help machine learning practitioners track, visualize, and manage their model training and evaluation workflows. Originally built around experiment tracking, W&B has grown into a full MLOps suite that covers the entire ML lifecycle — from initial experimentation through model versioning, dataset management, and production monitoring.

At its core, W&B provides a centralized dashboard where teams can log metrics, hyperparameters, model weights, and artifacts from any training run. A lightweight Python SDK instruments existing code with minimal changes: adding a few lines to a training script is enough to start capturing loss curves, GPU utilization, sample outputs, and custom visualizations in real time. This makes it practical to adopt W&B incrementally, without a full infrastructure overhaul.
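As a rough illustration of what "a few lines" can look like, the sketch below keeps a toy training loop independent of any logger and passes the W&B run's `log` method in from outside. The project name, the config values, and the `train` function itself are hypothetical; the loss formula is a stand-in for a real optimisation step.

```python
import math


def train(log, epochs=5, lr=0.01):
    """Toy training loop; `log` is any callable that accepts a dict of metrics."""
    loss = float("nan")
    for epoch in range(epochs):
        # Stand-in for a real optimisation step: loss decays with epoch and lr.
        loss = math.exp(-10.0 * lr * epoch)
        log({"epoch": epoch, "loss": loss})
    return loss


def main():
    import wandb  # third-party: pip install wandb

    # mode="offline" keeps data on local disk; drop it to stream to the hosted dashboard.
    run = wandb.init(
        project="demo-project",  # hypothetical project name
        config={"lr": 0.01, "epochs": 5},
        mode="offline",
    )
    train(run.log, epochs=run.config["epochs"], lr=run.config["lr"])
    run.finish()
```

Calling `main()` starts a run and records one metrics dict per epoch. Because `train` only depends on a callable, the same loop can be exercised in tests with `list.append` in place of the W&B logger.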

For teams working on LLM applications and AI agents, W&B offers Weave — a tracing and evaluation toolkit specifically designed for generative AI workflows. Weave logs the inputs, outputs, and intermediate steps of agent runs, making it possible to debug chain-of-thought behavior, compare prompt versions, and run structured evaluations against test datasets. This positions W&B alongside tools like LangSmith and Arize Phoenix in the LLM observability space, though W&B's strength is its deeper integration with the broader training and fine-tuning workflow rather than pure production monitoring.
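To make the idea of tracing concrete, here is a minimal stand-in written with the standard library only. It is not Weave's API (Weave ships its own decorator-based SDK); the `traced` decorator, the `TRACE` list, and the two toy functions are all hypothetical names used to show how inputs, outputs, and nested steps of an agent run can be captured.

```python
import functools

TRACE = []  # in-memory trace log; a real tool would ship these records to a backend


def traced(fn):
    """Record each call's name, inputs, and output in TRACE."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        record = {"name": fn.__name__, "args": args, "kwargs": kwargs}
        TRACE.append(record)  # append before the call so parents precede children
        record["output"] = fn(*args, **kwargs)
        return record["output"]
    return wrapper


@traced
def lookup_tool(query):
    # Hypothetical tool call; a real agent would hit a search API or database.
    return {"weather": "sunny"}.get(query, "unknown")


@traced
def agent(question):
    # Hypothetical agent step: call a tool, then format an answer.
    fact = lookup_tool("weather")
    return f"{question} -> {fact}"
```

After a call to `agent(...)`, `TRACE` holds one record for the agent step followed by one for the tool call, which is the kind of intermediate-step view that makes agent behavior debuggable.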

Model versioning is handled through W&B Artifacts, a content-addressed storage system that tracks datasets, model checkpoints, and other files as versioned objects linked to the runs that produced them. This creates an auditable lineage graph showing exactly which data and code produced a given model — useful for reproducibility and compliance.
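The sketch below illustrates the general idea of content-addressed versioning with lineage, using only the standard library. It is an assumption-laden toy, not W&B's implementation: the `ArtifactStore` class, its method names, and the `name:vN` labels are hypothetical and exist only to show how hashing file contents yields deduplicated versions tied to the run that produced them.

```python
import hashlib


class ArtifactStore:
    """Toy content-addressed store: identical bytes never create a new version."""

    def __init__(self):
        self.blobs = {}     # digest -> raw bytes
        self.versions = {}  # artifact name -> ordered list of digests
        self.lineage = {}   # "name:vN" -> id of the run that first produced it

    def log(self, name, data, run_id):
        digest = hashlib.sha256(data).hexdigest()
        self.blobs.setdefault(digest, data)  # store each distinct content once
        history = self.versions.setdefault(name, [])
        if digest not in history:
            # New content: create the next version and remember its producer.
            history.append(digest)
            self.lineage[f"{name}:v{len(history) - 1}"] = run_id
        return f"{name}:v{history.index(digest)}"
```

Logging the same bytes twice returns the existing version label, while changed bytes produce the next one, so a lookup in `lineage` answers the question of which run produced a given version.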

W&B sits in a competitive landscape that includes MLflow (open-source, self-hostable), Neptune.ai, Comet ML, and cloud-native options like SageMaker Experiments. Compared to MLflow, W&B offers a more polished hosted experience and richer visualization out of the box, but it requires sending data to W&B's servers unless the team uses the self-hosted enterprise option. For teams already invested in a cloud provider's ecosystem, native tools may be more convenient, but W&B's framework-agnostic SDK (supporting PyTorch, TensorFlow, JAX, Hugging Face, and more) makes it a strong choice for teams working across multiple frameworks.

The platform is widely used in academic research, AI startups, and enterprise ML teams. Its free tier is generous enough for individual researchers and small teams, while enterprise plans add SSO, private cloud deployment, and advanced access controls.

Key Features

- Experiment tracking with real-time metric logging, hyperparameter capture, and interactive dashboards

- Weave tracing for LLM and agent workflows — logs prompts, completions, tool calls, and intermediate steps

- W&B Artifacts for versioned dataset and model checkpoint storage with full lineage tracking

- Hyperparameter sweep automation (Bayesian, grid, and random search strategies)

- Collaborative reports for sharing interactive visualizations and analysis with teammates

- Model registry for managing model versions across the lifecycle from experimentation to production

- Integrations with major ML frameworks: PyTorch, TensorFlow, JAX, Hugging Face, LightGBM, and more

- Evaluation pipelines for structured LLM and agent benchmarking against test datasets
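To show how two of the sweep strategies listed above differ, here is a small standard-library sketch of grid and random search over a hyperparameter space. It does not use the W&B sweeps API, and Bayesian search is left out because it needs a surrogate model; the function names, the search space, and the objective are hypothetical.

```python
import itertools
import random


def grid_search(space):
    """Yield every combination of the listed values, in a fixed order."""
    keys = list(space)
    for combo in itertools.product(*(space[k] for k in keys)):
        yield dict(zip(keys, combo))


def random_search(space, n_trials, seed=0):
    """Yield n_trials configurations, sampling each parameter independently."""
    rng = random.Random(seed)  # seeded so that a sweep can be reproduced
    for _ in range(n_trials):
        yield {k: rng.choice(v) for k, v in space.items()}


def best_config(configs, objective):
    """Return the configuration with the lowest objective value."""
    return min(configs, key=objective)
```

Grid search cost grows with the product of the value counts, whereas random search keeps the trial budget fixed regardless of how many parameters are added, which is why it is often preferred for larger spaces.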

Pros & Cons

Pros

- Minimal instrumentation overhead — a few SDK calls integrate with existing training scripts

- Rich visualization layer makes it easy to compare runs and spot regressions quickly

- Weave provides purpose-built tracing for LLM/agent workflows, not just traditional ML

- Generous free tier suitable for individual researchers and academic use

- Strong ecosystem integrations across major ML frameworks, including PyTorch, TensorFlow, JAX, and Hugging Face

Cons

- Data is sent to W&B's hosted infrastructure by default; self-hosted deployment requires an enterprise contract

- Can feel heavyweight for simple projects where a local MLflow instance would suffice

- Weave/LLM tooling is newer and less mature than the core experiment tracking features

- Costs can scale quickly for large teams with high artifact storage needs

Pricing

Visit the official website for current pricing details.

Who Is This For?

Weights & Biases is best suited for ML engineers and research teams who run frequent training experiments and need a reliable way to compare results, reproduce runs, and manage model artifacts across projects. It is particularly strong for teams building or fine-tuning LLMs and AI agents who need both classic experiment tracking and modern LLM observability in a single platform.