LangGraph

Graph-based orchestration for LLM applications. Cyclical computation, built-in persistence.

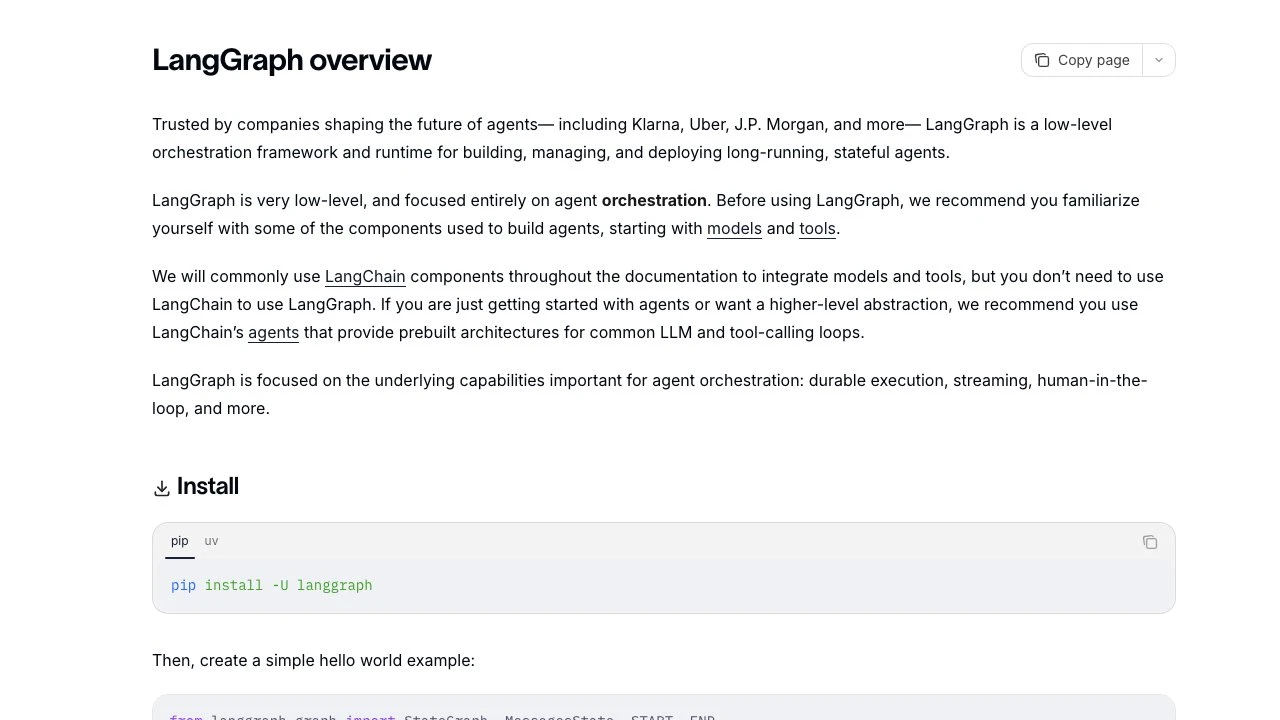

LangGraph is a low-level orchestration framework and runtime for building, managing, and deploying long-running, stateful agents powered by large language models. Developed by LangChain, it is trusted in production by companies including Klarna, Uber, and J.P. Morgan.

At its core, LangGraph models agent logic as a directed graph where nodes represent computation steps and edges represent transitions between them. Unlike higher-level frameworks that abstract away architecture decisions, LangGraph operates at the infrastructure layer — it gives developers explicit control over state management, execution flow, and agent behavior without imposing opinionated patterns on prompts or architecture.

The framework's defining feature is its support for cyclical computation, which allows agents to loop, branch, and revisit earlier states based on LLM output or external conditions. This stands in contrast to simple DAG-based pipelines (such as basic LangChain chains) that can only move forward through a fixed sequence of steps. Cyclical graphs are essential for building agents that reason iteratively: retrying failed actions, checking intermediate results, or asking clarifying questions before proceeding.
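To make the idea of a cyclical graph concrete, here is a minimal plain-Python sketch. It deliberately does not use LangGraph's API: the `NODES` and `ROUTERS` tables, the `END` sentinel, and `run_graph` are all hypothetical names chosen for illustration. It shows a single node whose conditional edge routes back to itself until a condition on the shared state is met.

```python
from typing import Callable, Dict

State = Dict[str, int]
END = "__end__"  # hypothetical sentinel marking the end of the run


def attempt(state: State) -> State:
    """Node: perform one unit of work and record the attempt."""
    return {**state, "attempts": state["attempts"] + 1}


def route_after_attempt(state: State) -> str:
    """Conditional edge: loop back to 'attempt' until the target is reached."""
    return END if state["attempts"] >= state["target"] else "attempt"


# Illustrative graph definition: one node plus the router that follows it.
NODES: Dict[str, Callable[[State], State]] = {"attempt": attempt}
ROUTERS: Dict[str, Callable[[State], str]] = {"attempt": route_after_attempt}


def run_graph(state: State, entry: str = "attempt", max_steps: int = 25) -> State:
    """Walk the graph node by node, following whichever edge the router picks."""
    current = entry
    for _ in range(max_steps):  # step cap guards against an unbounded cycle
        state = NODES[current](state)
        current = ROUTERS[current](state)
        if current == END:
            return state
    raise RuntimeError("step limit reached before the graph finished")


result = run_graph({"attempts": 0, "target": 3})
```

The step cap matters in any cyclical design: because an edge can point backward, nothing else guarantees that a run terminates.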

State persistence is built into LangGraph's execution model rather than bolted on. When a graph is compiled with a checkpointer, its state is saved after every step, meaning agents can survive failures, resume from the last successful step, and maintain context across long-running tasks or multiple sessions. This durable execution model is what separates LangGraph from simpler tool-calling loops and makes it viable for production workloads that may run for minutes or hours.
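The checkpoint-and-resume behavior described above can be sketched in a few lines of standard-library Python. This is an illustration of the general technique, not LangGraph's checkpointer implementation: the step names, the JSON file layout, and the `fail_at` parameter are all assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional

STEPS = ["fetch", "summarize", "publish"]  # hypothetical pipeline steps


def run_with_checkpoints(checkpoint: Path, fail_at: Optional[str] = None) -> dict:
    """Run STEPS in order, saving state after each one and skipping completed steps."""
    state = json.loads(checkpoint.read_text()) if checkpoint.exists() else {"done": []}
    for step in STEPS:
        if step in state["done"]:
            continue  # already completed in an earlier run
        if step == fail_at:
            raise RuntimeError(f"simulated crash in {step!r}")
        state["done"].append(step)
        checkpoint.write_text(json.dumps(state))  # persist after every step
    return state


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "thread-1.json"
    try:
        run_with_checkpoints(path, fail_at="summarize")
    except RuntimeError:
        pass  # the first run dies after 'fetch' was checkpointed
    saved = json.loads(path.read_text())
    resumed = run_with_checkpoints(path)  # second run picks up after 'fetch'
```

Because state is written after each completed step rather than only at the end, a crash costs at most the step that was in flight.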

Human-in-the-loop capabilities are a first-class feature. Developers can insert interrupt points anywhere in the graph, allowing a human to inspect the current agent state, modify it, and decide whether execution should continue, branch, or abort. This makes LangGraph well-suited for high-stakes workflows where autonomous operation needs human oversight checkpoints.
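One way to picture an interrupt point is as a function that pauses, hands its state to a reviewer, and continues only once a decision comes back. The sketch below models that with a plain Python generator. It is not LangGraph's interrupt API, and `refund_workflow`, `resume`, and the refund scenario are hypothetical.

```python
from typing import Generator


def refund_workflow(amount: float) -> Generator[dict, str, str]:
    """Hypothetical workflow that pauses for approval before the risky step."""
    state = {"amount": amount, "status": "pending_review"}
    decision = yield state  # interrupt point: hand state to a human and wait
    if decision == "approve":
        return f"refunded {state['amount']:.2f}"
    return "aborted"


def resume(workflow: Generator[dict, str, str], decision: str) -> str:
    """Send the human's decision into the paused workflow and collect its result."""
    try:
        workflow.send(decision)
    except StopIteration as finished:
        return finished.value
    raise RuntimeError("workflow paused again unexpectedly")


run = refund_workflow(42.0)
paused_state = next(run)  # runs up to the interrupt and exposes the state
paused_state["amount"] = 40.0  # the reviewer edits the state before approving
outcome = resume(run, "approve")
```

In this sketch the reviewer can inspect the state, modify it in place, and then choose whether the workflow continues or aborts, which mirrors the three options the paragraph above describes.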

LangGraph integrates naturally with the broader LangChain ecosystem — including LangSmith for tracing, debugging, and evaluation — but it does not require LangChain components. Any Python-compatible LLM client or tool library can be wired into a LangGraph graph.

Compared to alternatives like CrewAI or AutoGen, LangGraph offers more granular control at the cost of a steeper learning curve. CrewAI and AutoGen provide higher-level abstractions for multi-agent collaboration with less boilerplate; LangGraph requires developers to explicitly define graph topology and state schemas. For teams that need predictable, debuggable agent behavior in production, that explicitness is often the point.

LangGraph also supports subgraphs, allowing complex agent systems to be composed from modular, reusable graph components. Streaming is built in, enabling real-time output from long-running agents. The framework ships with a local development server for rapid iteration and connects to LangSmith Studio for visual graph inspection.
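As a rough illustration of subgraph composition and streaming together, the following plain-Python sketch wraps a small pipeline so that it can act as a single node in a parent pipeline, then yields each node's update as soon as it is produced. None of these names come from LangGraph: `make_pipeline`, `stream`, and the research example are assumptions for the sake of the example.

```python
from typing import Callable, Dict, Iterator, List, Tuple

State = Dict[str, str]
Node = Callable[[State], State]


def make_pipeline(nodes: List[Tuple[str, Node]]) -> Node:
    """Wrap a sequence of nodes so the whole pipeline can be used as one node."""
    def run(state: State) -> State:
        for _, node in nodes:
            state = {**state, **node(state)}
        return state
    return run


def stream(nodes: List[Tuple[str, Node]], state: State) -> Iterator[Tuple[str, State]]:
    """Yield (node name, update) as soon as each node finishes."""
    for name, node in nodes:
        update = node(state)
        state = {**state, **update}
        yield name, update


# Hypothetical research sub-pipeline reused as a single node in the parent.
research = make_pipeline([
    ("search", lambda s: {"hits": f"results for {s['topic']}"}),
    ("rank", lambda s: {"best": s["hits"].upper()}),
])
parent = [
    ("research", research),
    ("write", lambda s: {"draft": f"Report: {s['best']}"}),
]

events = list(stream(parent, {"topic": "llm agents"}))
```

A caller iterating over `stream(...)` sees the research result before the draft is written, which is the property that makes streaming useful for long-running agents.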

Key Features

- Cyclical graph execution: Model agent logic as graphs with cycles, enabling iterative reasoning, retries, and conditional branching

- Built-in state persistence: With a checkpointer configured, state is saved at every step, so agents resume from the last known state after failures or interruptions

- Human-in-the-loop interrupts: Insert pause points anywhere in the graph to inspect, modify, or approve agent state before continuing

- Durable execution: Agents can run for extended periods without losing context, suitable for long-horizon tasks

- Streaming support: Real-time output streaming from any node in the graph during execution

- Subgraph composition: Build complex multi-agent systems by composing modular, reusable graph components

- LangSmith integration: Deep observability through execution traces, state transition visualization, and runtime metrics

- Framework-agnostic: Works with any Python LLM client — LangChain components are optional, not required

Pros & Cons

Pros

- Explicit control over agent state and execution flow without hidden abstractions

- Production-grade persistence and durable execution built into the core runtime

- Human-in-the-loop support is first-class, not an afterthought

- Works with any LLM provider or Python tooling, not locked into LangChain

- Active ecosystem with LangSmith for debugging and observability

Cons

- Steeper learning curve than higher-level agent frameworks like CrewAI or AutoGen

- Requires developers to define graph topology and state schemas explicitly — more boilerplate for simple use cases

- Low-level design means that, beyond a few prebuilt helpers (such as a ReAct-style tool-calling agent), most agent patterns must be assembled manually from nodes and edges

- Observability and deployment features are tightly coupled to LangSmith, which is a commercial product

Pricing

The open-source LangGraph library is free to use under the MIT license. LangSmith, the companion observability and deployment platform, is a commercial product with separate pricing. Visit the official website for current pricing details.

Who Is This For?

LangGraph is best suited for engineering teams building production agent systems that require fine-grained control over execution flow, reliable state management, and human oversight checkpoints. It excels at long-running, multi-step workflows — such as automated research pipelines, document processing agents, or complex customer support bots — where predictability and debuggability matter more than development speed. Teams already invested in the LangChain ecosystem will find the tightest integration, but any Python shop comfortable with graph-based thinking can adopt it independently.