Letta

Framework for building agents with persistent memory. Formerly MemGPT. Effectively unlimited context via memory management.

Letta (formerly MemGPT) is an open-source framework for building stateful LLM agents with persistent, long-term memory. Where most agent frameworks treat each session as stateless — discarding all context when the conversation ends — Letta agents retain memory across sessions, allowing them to accumulate knowledge, refine their behavior, and build a continuous model of the user and their work over time.
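
To make the stateless/stateful distinction concrete, here is a minimal sketch. It assumes agent state can be reduced to a list of learned facts plus a message history, saved as a JSON file between runs. `AgentState`, `load_state`, `save_state`, and `run_session` are hypothetical names for illustration, not part of Letta's API, and a real runtime would use more durable storage than a single file.

```python
# Hypothetical sketch: names below are illustrative, not Letta's API.
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Optional


@dataclass
class AgentState:
    """Everything the agent should still know in the next session."""
    agent_id: str
    facts: list[str] = field(default_factory=list)      # long-term knowledge
    messages: list[dict] = field(default_factory=list)  # full conversation history


def load_state(path: Path, agent_id: str) -> AgentState:
    # Resume from a previous session if one was saved; otherwise start fresh.
    if path.exists():
        return AgentState(**json.loads(path.read_text()))
    return AgentState(agent_id=agent_id)


def save_state(state: AgentState, path: Path) -> None:
    # Persist the whole state so the next session builds on it.
    path.write_text(json.dumps(asdict(state)))


def run_session(path: Path, user_message: str, learned_fact: Optional[str] = None) -> AgentState:
    state = load_state(path, agent_id="agent-1")
    state.messages.append({"role": "user", "content": user_message})
    if learned_fact and learned_fact not in state.facts:
        state.facts.append(learned_fact)
    save_state(state, path)
    return state


# Two separate "sessions" that share one state file.
with tempfile.TemporaryDirectory() as tmp:
    state_file = Path(tmp) / "agent.json"
    run_session(state_file, "I prefer tabs over spaces.", learned_fact="User prefers tabs")
    second = run_session(state_file, "Reformat this file.")

print(second.facts)          # ['User prefers tabs']
print(len(second.messages))  # 2
```

A stateless agent would construct a fresh `AgentState` on every run and lose the learned fact; the only difference here is that state is reloaded before each session and saved after it.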

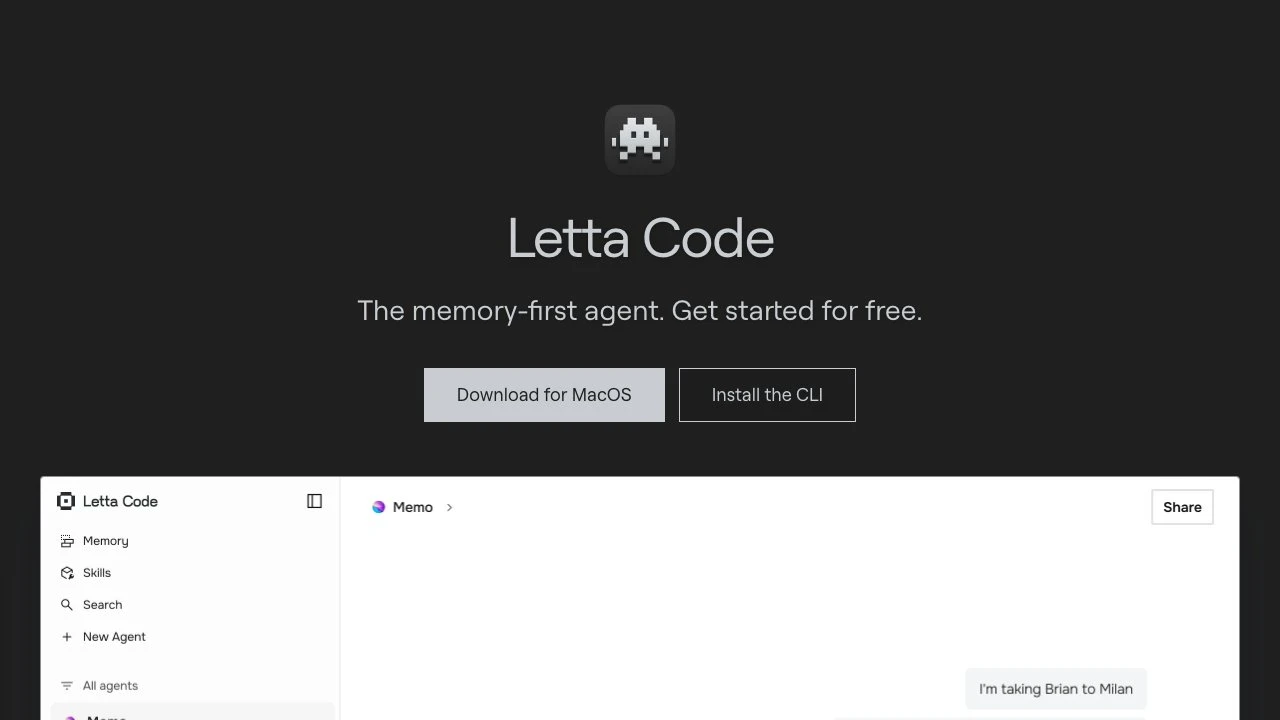

The core product is Letta Code, a memory-first coding agent available as a CLI tool, with a macOS desktop app announced but not yet released at the time of writing. Agents built on Letta don't just remember past conversations; they actively manage their own memory through background subagents that refine prompts, context, and skills as they gain experience. Users can inspect and edit their agent's memory directly through a "memory palace" interface, which exposes what the agent knows and how it reasons.
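
Self-managed memory can be pictured as labeled, size-limited text blocks that are always placed in the prompt and that both the agent and the user can edit. The sketch below is modeled loosely on MemGPT's "core memory" idea; `MemoryBlock`, its `append`/`replace` methods, the character limit, and the tag-style rendering are assumptions for illustration, not Letta's actual API or prompt format.

```python
# Hypothetical sketch of editable memory blocks; not Letta's actual API.
from dataclasses import dataclass


@dataclass
class MemoryBlock:
    """A labeled, size-limited chunk of memory that is always placed in the prompt."""
    label: str
    value: str
    limit: int = 2000  # max characters; illustrative value

    def append(self, text: str) -> None:
        # Add a new line of knowledge, e.g. a fact the agent just learned.
        new_value = f"{self.value}\n{text}" if self.value else text
        self._check(new_value)
        self.value = new_value

    def replace(self, old: str, new: str) -> None:
        # Correct an existing entry in place (first occurrence only).
        if old not in self.value:
            raise ValueError(f"{old!r} not found in block {self.label!r}")
        new_value = self.value.replace(old, new, 1)
        self._check(new_value)
        self.value = new_value

    def _check(self, candidate: str) -> None:
        # Enforce the size limit so memory cannot crowd out the rest of the prompt.
        if len(candidate) > self.limit:
            raise ValueError(f"block {self.label!r} would exceed {self.limit} chars")


def render_core_memory(blocks: list[MemoryBlock]) -> str:
    # Compile the blocks into text that would be added to the system prompt.
    return "\n".join(f"<{b.label}>\n{b.value}\n</{b.label}>" for b in blocks)


human = MemoryBlock("human", "Name: Sam")
persona = MemoryBlock("persona", "I am a coding assistant.")

# The agent (for example via a tool call) records a fact it learned.
human.append("Prefers pytest over unittest")
# A user inspecting memory corrects an entry by hand.
human.replace("Name: Sam", "Name: Samantha")

print(render_core_memory([persona, human]))
```

Because the agent and the user operate on the same blocks, anything the agent writes is immediately visible and editable, which is the transparency property the memory palace is described as providing.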

Unlike frameworks such as LangChain or LlamaIndex, which focus primarily on retrieval pipelines and chain composition, Letta's differentiator is the agent runtime itself, specifically the memory management layer. The underlying model's context window stays fixed, but the usable context is effectively unlimited because the framework manages what gets stored, summarized, or surfaced at any given moment. This makes Letta particularly well-suited for long-horizon tasks where continuity matters: personal assistants, coding agents that understand a project's history, or customer-facing agents that need to remember individual users.
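
The idea behind "effectively unlimited" context can be illustrated with a toy context manager: recent messages stay in the prompt, older ones are evicted to an external recall store once a token budget is exceeded, and a running summary stands in for what was evicted. This is a sketch of the general MemGPT-style paging approach under stated assumptions, not Letta's implementation: token counting is approximated by word count, `summarize` is a placeholder for an LLM call, and all names are hypothetical.

```python
# Hypothetical sketch of MemGPT-style context paging; not Letta's implementation.
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[str]) -> str:
    # Placeholder: a real system would ask the LLM to summarize these messages.
    return f"[summary of {len(messages)} earlier messages]"


@dataclass
class ContextManager:
    budget: int                                            # max tokens allowed in the prompt
    in_context: list[str] = field(default_factory=list)    # messages the model sees
    recall_store: list[str] = field(default_factory=list)  # evicted, but still searchable
    summary: str = ""                                      # stands in for evicted messages

    def tokens_used(self) -> int:
        return count_tokens(self.summary) + sum(count_tokens(m) for m in self.in_context)

    def add(self, message: str) -> None:
        self.in_context.append(message)
        # Evict oldest-first until the prompt fits, always keeping the newest message.
        while self.tokens_used() > self.budget and len(self.in_context) > 1:
            self.recall_store.append(self.in_context.pop(0))
            self.summary = summarize(self.recall_store)

    def search_recall(self, keyword: str) -> list[str]:
        # Evicted messages are not lost: they can be surfaced again on demand.
        return [m for m in self.recall_store if keyword.lower() in m.lower()]

    def prompt(self) -> list[str]:
        # What would be sent to the model on the next turn.
        return ([self.summary] if self.summary else []) + self.in_context


ctx = ContextManager(budget=26)
ctx.add("user: the deploy script lives in scripts/deploy.sh")  # 7 "tokens"
for i in range(5):
    ctx.add(f"user: question {i} about the test suite")       # 7 "tokens" each

print(ctx.tokens_used())            # 26
print(ctx.prompt()[0])              # [summary of 3 earlier messages]
print(ctx.search_recall("deploy"))  # ['user: the deploy script lives in scripts/deploy.sh']
```

The key design choice is that eviction is not deletion: `search_recall` can bring an old message back when it becomes relevant, which is what lets a bounded prompt draw on an unbounded history.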

Memory portability is a notable design choice. Users can move agent memory — including conversation histories and context — between different model providers. This reduces lock-in and gives developers and end users flexibility to swap underlying models (e.g., from OpenAI to Anthropic) without losing accumulated agent state.
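
Portability follows from keeping agent state in a provider-neutral form and translating it into each provider's request shape only at call time. The sketch below assumes two target shapes: OpenAI's Chat Completions format, where the system prompt is a message with role "system", and Anthropic's Messages API, where the system prompt is a separate top-level `system` field. `PortableMemory` and the converter functions are hypothetical, and the returned dicts omit other required request fields such as the model name.

```python
# Hypothetical sketch: PortableMemory and the converters are illustrative names.
import json
from dataclasses import dataclass


@dataclass
class PortableMemory:
    """Provider-neutral agent state: compiled core memory plus chat history."""
    core_memory: str
    history: list[dict]  # [{"role": "user" or "assistant", "content": str}, ...]

    def to_json(self) -> str:
        return json.dumps({"core_memory": self.core_memory, "history": self.history})

    @classmethod
    def from_json(cls, raw: str) -> "PortableMemory":
        data = json.loads(raw)
        return cls(core_memory=data["core_memory"], history=data["history"])


def to_openai_messages(mem: PortableMemory) -> list[dict]:
    # Chat Completions shape: the system prompt is the first message in the list.
    return [{"role": "system", "content": mem.core_memory}] + list(mem.history)


def to_anthropic_request(mem: PortableMemory) -> dict:
    # Messages API shape: the system prompt is a top-level field, and the
    # message list holds only user/assistant turns.
    return {"system": mem.core_memory, "messages": list(mem.history)}


saved = PortableMemory(
    core_memory="<human>\nPrefers pytest\n</human>",
    history=[
        {"role": "user", "content": "Add a test for parse()."},
        {"role": "assistant", "content": "Added tests/test_parse.py."},
    ],
).to_json()

# Later, possibly on another machine or with a different provider:
restored = PortableMemory.from_json(saved)
openai_msgs = to_openai_messages(restored)
anthropic_req = to_anthropic_request(restored)

print(openai_msgs[0]["role"], len(openai_msgs))  # system 3
print(len(anthropic_req["messages"]))            # 2
```

Because the history is stored once in a neutral form, switching providers changes only the converter, not the stored state.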

Letta Code supports bring-your-own API keys and existing coding plans, making it accessible without a separate subscription. The `letta server` command enables remote control of agents running on external machines, and memory, context, and files can be migrated across devices. The CLI is available via npm (Node.js 18+ required), and an SDK is available for developers who want to build custom applications on top of the Letta runtime.

In the broader agent ecosystem, Letta occupies a distinct niche. Tools like Cursor or GitHub Copilot are IDE-integrated coding assistants that are not built around persistent, agent-managed memory. OpenAI's GPT-based assistants support some memory, but it is largely opaque to users and cannot be carried over to other providers. Letta's approach (transparent, editable, portable memory) appeals to developers who want more control over agent state and behavior over time.

The project is open source (Python-based), with an active community on Discord and continued research backing from the original MemGPT team. It is a practical choice for developers building production agents where statefulness, continuity, and user-specific personalization are first-class requirements.

Key Features

- Persistent agents that retain memory across sessions, building on prior context rather than starting fresh

- Background memory subagents that automatically improve prompts, context, and skills over time

- Memory palace UI for transparent inspection and manual editing of agent memory

- Memory portability — move conversation history and context between different LLM providers

- Remote agent control via `letta server`, enabling agents to run on external machines and be accessed from any device

- Bring-your-own API keys and coding plan support with no forced subscription

- Available as a CLI (npm) and SDK for custom development, with a macOS desktop app announced

- Open-source Python framework, formerly known as MemGPT

Pros & Cons

Pros

- Genuine long-term memory that persists across sessions, not just within a context window

- Full transparency into agent memory — users can view and edit what the agent knows

- Model-agnostic with memory portability across providers, reducing vendor lock-in

- Active open-source community with research-backed development

- Flexible deployment: desktop app, CLI, SDK, and remote server modes

Cons

- Relatively early-stage product; the macOS desktop app had not yet been released at the time of writing

- Memory management adds architectural complexity compared to simpler stateless agent frameworks

- CLI requires Node.js 18+, which adds a runtime dependency for a Python-native project

- Smaller ecosystem of integrations and plugins compared to more established frameworks like LangChain

Pricing

Letta Code is free to get started with when you use your own API keys or an existing coding plan. A managed service option is available through Letta's platform. Visit the official website for current pricing details.

Who Is This For?

Letta is best suited for developers and teams building agents where continuity and personalization matter — coding assistants, personal productivity agents, or customer-facing bots that need to remember individual users across many sessions. It is particularly valuable for projects where transparent, portable, and editable agent memory is a requirement, and for teams that want to avoid vendor lock-in by keeping model choice flexible.