Replicate

Run open-source ML models in the cloud. Easy deployment of custom AI agent components.

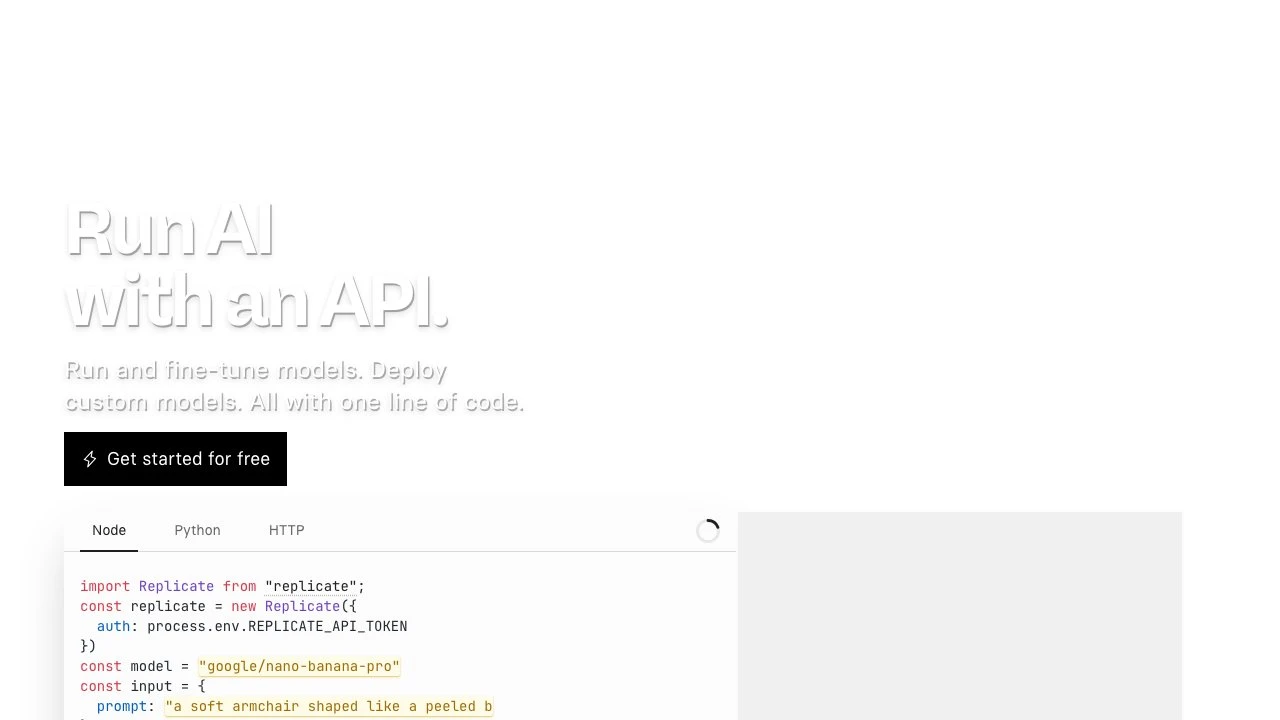

Replicate is a cloud platform that allows developers to run, fine-tune, and deploy machine learning models through a simple API. Rather than managing GPU infrastructure, model dependencies, or scaling concerns, developers interact with a unified interface that abstracts away the complexity of ML deployment. Replicate has recently joined Cloudflare, signaling deeper integration with edge infrastructure.

At its core, Replicate hosts thousands of open-source models across categories including image generation, video generation, speech synthesis, music generation, large language models, and image restoration. Models from prominent research labs and organizations, including Black Forest Labs (FLUX series), Google, ByteDance, and OpenAI, are available, and their run counts in the millions suggest production-scale usage.

The developer experience centers on a consistent API surface. A model can be invoked from Node.js, Python, or raw HTTP in a few lines of code. With the Node.js client, for example, a call involves four steps: import the replicate package, authenticate with an API token, specify a model identifier, and pass an input object. Because every model follows the same pattern, switching between entirely different model types (say, from image generation to speech) requires minimal code changes.
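As a rough illustration of that uniformity, the sketch below builds (but does not send) the raw HTTP request for two different model types using only the Python standard library. The endpoint path and `Bearer` token header reflect the shape of Replicate's public HTTP API as best understood here and should be checked against the current API reference. The `build_prediction_request` helper, the `example-org/example-tts` identifier, and the input field names are assumptions made for illustration; each model's page defines its real input schema.

```python
import json
import os
import urllib.request

API_BASE = "https://api.replicate.com/v1"  # assumed base URL of the HTTP API


def build_prediction_request(model: str, inputs: dict, token: str) -> urllib.request.Request:
    """Build (without sending) a POST request that would start a prediction.

    `model` is an "owner/name" identifier; `inputs` is the model-specific input object.
    """
    owner, name = model.split("/", 1)
    body = json.dumps({"input": inputs}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/models/{owner}/{name}/predictions",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Read the token from the environment; the fallback is a placeholder, not a real token.
token = os.environ.get("REPLICATE_API_TOKEN", "r8_placeholder")

# Image generation: only the identifier and the input object are model-specific.
image_req = build_prediction_request(
    "black-forest-labs/flux-schnell",
    {"prompt": "a lighthouse at dusk"},  # assumed input field name
    token,
)

# Speech synthesis: same helper, different identifier and input.
speech_req = build_prediction_request(
    "example-org/example-tts",  # hypothetical model identifier
    {"text": "Hello from the agent."},  # assumed input field name
    token,
)

# Sending would be urllib.request.urlopen(image_req), which needs a valid token and network access.
print(image_req.full_url)
print(speech_req.full_url)
```

The two calls differ only in the model identifier and the input object, which is the property the paragraph above describes.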

Beyond running hosted models, Replicate supports fine-tuning, allowing teams to adapt base models to domain-specific data. Custom models can also be deployed on the platform, making it practical for organizations that have trained proprietary models but want managed inference infrastructure rather than building their own serving layer.

In the broader ecosystem, Replicate sits between raw cloud GPU providers (like Lambda Labs or RunPod) and higher-level AI application platforms. Compared to running models on raw GPU instances, Replicate trades some configurability for much faster setup: no driver management, no containerization work, and no autoscaling configuration. Compared to closed API providers like OpenAI or Stability AI's hosted endpoints, Replicate offers a much wider catalog of open-source models, including community-contributed ones, and also supports custom model deployment.

For AI agent builders, Replicate works well as a component provider: its API can supply image generation, vision, or audio capabilities to an agent pipeline, so the team building the agent does not need to operate any ML serving infrastructure.
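One way to picture this component-provider role is a small capability table that maps agent tool names to hosted models. The sketch below is an assumption about how an agent team might structure this, not a Replicate feature: the `Capability` type, the `call_capability` function, the model identifiers, and the input field names are all hypothetical. The function that actually runs a model is injected, so it could wrap an official client or an HTTP call in production and a stub in tests.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

# A runner takes (model identifier, input object) and returns the model output.
# It is injected so the agent code never touches serving details.
Runner = Callable[[str, Dict[str, Any]], Any]


@dataclass(frozen=True)
class Capability:
    model: str  # "owner/name" identifier (placeholders below)
    build_input: Callable[[str], Dict[str, Any]]  # maps the agent's request to model input


# Hypothetical capability table; model names and input fields are placeholders.
CAPABILITIES: Dict[str, Capability] = {
    "generate_image": Capability("example-org/image-model", lambda text: {"prompt": text}),
    "speak": Capability("example-org/tts-model", lambda text: {"text": text}),
}


def call_capability(name: str, request: str, run: Runner) -> Any:
    """Dispatch an agent tool call to the hosted model behind `name`."""
    if name not in CAPABILITIES:
        raise KeyError(f"unknown capability: {name}")
    cap = CAPABILITIES[name]
    return run(cap.model, cap.build_input(request))


def fake_runner(model: str, inputs: Dict[str, Any]) -> str:
    """Stand-in runner for local testing; performs no network I/O."""
    return f"{model} <- {sorted(inputs)}"


print(call_capability("generate_image", "a red bicycle", fake_runner))
```

Keeping the runner injectable means the agent logic can be exercised without credentials or network access, and the hosted model behind a capability can be swapped by editing one table entry.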

The platform also includes a Playground for comparing models interactively, which is useful during model selection before committing to API integration. Enterprise offerings are available for organizations with higher compliance, support, or volume requirements.

Key Features

- API access to thousands of open-source ML models across image, video, audio, LLM, and vision categories

- Fine-tuning support to adapt existing models to custom datasets

- Custom model deployment for teams with proprietary trained models

- Consistent interface for all models across the Node.js client, the Python client, and raw HTTP

- Interactive Playground for comparing models before API integration

- Models from major providers including Black Forest Labs, Google, ByteDance, and OpenAI

- Enterprise tier for organizations with advanced compliance and support needs

- Pay-per-run pricing model that eliminates idle infrastructure costs

Pros & Cons

Pros

- Eliminates GPU infrastructure management: no drivers, containers, or autoscaling to configure

- Broad model catalog spanning image, video, audio, language, and vision models

- Consistent API surface makes switching between model types straightforward

- Community-contributed models alongside flagship research lab models

- Fine-tuning and custom model hosting in one platform

Cons

- Less control over underlying infrastructure compared to raw GPU cloud providers

- At high inference volumes, costs can grow quickly relative to self-hosted solutions

- Dependent on Replicate's model availability and uptime for production workloads

- Some cutting-edge proprietary models may not be available on the platform

Pricing

Replicate uses a pay-per-run pricing model billed by compute time, with rates varying by model and hardware tier. Visit the official website for current pricing details.
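Because billing is tied to compute time, a rough monthly estimate is simply runs × seconds per run × per-second rate. The sketch below shows that arithmetic; the rate and workload figures are placeholders chosen for illustration, not Replicate's actual prices, and `estimate_monthly_cost` is a hypothetical helper.

```python
def estimate_monthly_cost(runs_per_month: int, seconds_per_run: float, rate_per_second: float) -> float:
    """Estimate monthly spend when billing is proportional to compute time."""
    if min(runs_per_month, seconds_per_run, rate_per_second) < 0:
        raise ValueError("inputs must be non-negative")
    return runs_per_month * seconds_per_run * rate_per_second


# Placeholder figures for illustration only; NOT Replicate's actual rates.
HYPOTHETICAL_RATE = 0.001  # dollars per second of compute on some hardware tier

cost = estimate_monthly_cost(
    runs_per_month=50_000,
    seconds_per_run=4.0,
    rate_per_second=HYPOTHETICAL_RATE,
)
print(f"Estimated monthly cost: ${cost:,.2f}")
```

Plugging in the published rate for a given model's hardware tier and a measured average run time gives a first-order figure to compare against a self-hosted setup.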

Who Is This For?

Replicate is best suited for developers and engineering teams who want to integrate ML model inference into applications or agent pipelines without operating their own GPU infrastructure. It is particularly well-matched for projects that require access to a wide range of open-source models, especially for image, video, or audio generation, and for teams that need to deploy fine-tuned custom models without building a dedicated serving layer.