Whisper

OpenAI's open-source speech recognition model. Multilingual transcription for voice agent pipelines.

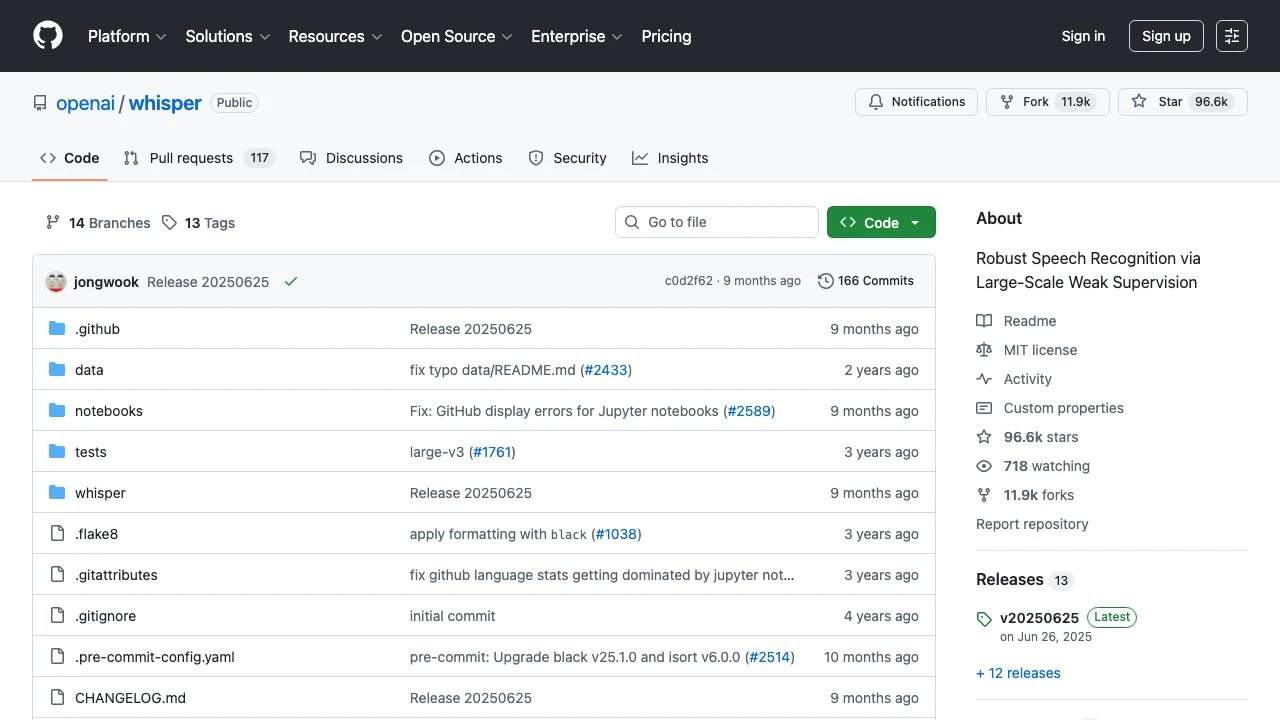

Whisper is an open-source automatic speech recognition (ASR) system developed by OpenAI. Released publicly on GitHub, it is trained on a large dataset of diverse audio and is capable of performing multilingual transcription, translation, and language identification. With over 96,000 GitHub stars, it has become one of the most widely adopted speech recognition libraries in the developer community.

At its core, Whisper uses a transformer-based encoder-decoder architecture. Audio is split into 30-second chunks, converted into log-Mel spectrograms, and passed through an encoder. A decoder then predicts transcription tokens, conditioned on the encoded audio representation. This design makes it robust across a wide range of accents, background noise levels, and recording conditions.
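The windowing step can be illustrated with a minimal sketch. It assumes 16 kHz mono audio (the rate Whisper resamples to) and uses a hypothetical helper, `split_into_chunks`, that is not part of the Whisper package. The library handles windowing internally and may advance through the audio differently, so treat this as an illustration of the idea rather than the reference implementation.

```python
SAMPLE_RATE = 16_000                         # Hz; assumed input rate for this sketch
CHUNK_SECONDS = 30                           # window length described above
CHUNK_SAMPLES = SAMPLE_RATE * CHUNK_SECONDS  # 480,000 samples per window


def split_into_chunks(samples, chunk_samples=CHUNK_SAMPLES):
    """Split a mono sample sequence into fixed-size windows.

    Hypothetical helper for illustration: the final window is zero-padded
    so that every chunk has exactly `chunk_samples` entries.
    """
    chunks = []
    for start in range(0, len(samples), chunk_samples):
        chunk = list(samples[start:start + chunk_samples])
        # Pad the last window with silence up to the fixed length.
        chunk.extend([0.0] * (chunk_samples - len(chunk)))
        chunks.append(chunk)
    return chunks


if __name__ == "__main__":
    audio = [0.0] * (SAMPLE_RATE * 70)  # 70 seconds of silence as stand-in audio
    windows = split_into_chunks(audio)
    print(len(windows), len(windows[-1]))
```

Each fixed-length window would then be converted to a log-Mel spectrogram before reaching the encoder.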

Whisper ships in multiple model sizes (tiny, base, small, medium, and large, with large-v3 as a later revision of the large model), allowing developers to trade off speed against accuracy depending on their deployment constraints. The smallest models can run comfortably on consumer CPUs, while the large variants require a capable GPU for real-time performance.
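One way to make this trade-off explicit is a small lookup that picks the largest model fitting a memory budget. The sketch below is illustrative only: `pick_model_size` is a hypothetical helper, and the VRAM figures are rough approximations based on the project README that should be checked against current documentation before use.

```python
# Approximate VRAM needs in GB. These are rough guide values (an assumption
# of this sketch); verify them against the current Whisper README.
APPROX_VRAM_GB = {
    "tiny": 1,
    "base": 1,
    "small": 2,
    "medium": 5,
    "large": 10,
}

# Ordered from most accurate (and slowest) to least accurate (and fastest).
SIZES_BY_ACCURACY = ["large", "medium", "small", "base", "tiny"]


def pick_model_size(available_vram_gb):
    """Return the most accurate model whose approximate VRAM need fits the budget."""
    for name in SIZES_BY_ACCURACY:
        if APPROX_VRAM_GB[name] <= available_vram_gb:
            return name
    # Nothing fits the budget: fall back to the smallest model (e.g. for CPU use).
    return "tiny"


if __name__ == "__main__":
    for budget in (0.5, 2, 6, 24):
        print(budget, "GB ->", pick_model_size(budget))
```

In practice latency targets matter as much as memory, so a real selection policy would likely also benchmark each candidate on representative audio.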

The library supports transcription in nearly 100 languages and can translate non-English audio directly to English text. This makes it particularly useful for multilingual voice agent pipelines, subtitling workflows, and meeting transcription systems that need broad language coverage without managing separate per-language models.
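A minimal sketch of both modes follows, assuming the `openai-whisper` package is installed (`pip install -U openai-whisper`) and `ffmpeg` is available on the system. The wrapper `transcribe_file` and its parameters are hypothetical names chosen for this example; `whisper.load_model` and `model.transcribe(..., task=...)` are the package's own calls.

```python
def transcribe_file(path, model=None, model_name="base", translate_to_english=False):
    """Transcribe an audio file, optionally translating the result to English.

    Hypothetical wrapper for illustration. `model` can be any object with a
    Whisper-style `transcribe(path, task=...)` method; if it is omitted, a
    Whisper model is loaded on demand.
    """
    if model is None:
        import whisper  # imported lazily so the sketch loads without the package

        model = whisper.load_model(model_name)

    # "transcribe" keeps the spoken language; "translate" outputs English text.
    task = "translate" if translate_to_english else "transcribe"
    result = model.transcribe(path, task=task)

    return {"language": result["language"], "text": result["text"].strip()}


if __name__ == "__main__":
    # "interview_de.mp3" is a placeholder path, not a file shipped with Whisper.
    print(transcribe_file("interview_de.mp3", translate_to_english=True))
```

The returned `language` field reflects Whisper's built-in language identification, so the same call works without telling the model which language to expect.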

Compared to commercial alternatives like Google Speech-to-Text, Amazon Transcribe, or AssemblyAI, Whisper's primary advantage is that it runs entirely locally: there are no API costs, no data leaves the machine, and there are no rate limits. This makes it attractive for privacy-sensitive applications and for use cases with high transcription volume. The trade-off is that running large Whisper models requires meaningful compute resources. Real-time streaming is also not natively supported by the base implementation, though community projects like faster-whisper and whisper-streaming address this.

For voice agent developers, Whisper fits naturally into pipelines where audio is captured from a user, transcribed to text, processed by an LLM, and then converted back to speech via a TTS system. Because it is self-hosted, it can be integrated at any layer of the stack without introducing external API dependencies or latency from network round-trips.
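The shape of such a pipeline can be sketched with three pluggable stages. Everything here is hypothetical scaffolding: `run_voice_turn` and the stub functions are illustrative names, and a real system would pass in a Whisper-backed transcriber, an LLM client, and a TTS engine in their place.

```python
from typing import Callable


def run_voice_turn(
    audio_path: str,
    transcribe: Callable[[str], str],
    generate_reply: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> bytes:
    """Run one conversational turn: audio in, synthesized reply audio out."""
    user_text = transcribe(audio_path)      # ASR stage (e.g. a Whisper wrapper)
    reply_text = generate_reply(user_text)  # LLM stage
    return synthesize(reply_text)           # TTS stage


if __name__ == "__main__":
    # Stub stages so the sketch runs without any models installed.
    def fake_asr(path: str) -> str:
        return "what time is it"

    def fake_llm(text: str) -> str:
        return f"You asked: {text}"

    def fake_tts(text: str) -> bytes:
        return text.encode("utf-8")

    print(run_voice_turn("turn_001.wav", fake_asr, fake_llm, fake_tts))
```

Keeping each stage behind a plain callable makes it straightforward to swap a self-hosted Whisper model for another ASR backend without touching the rest of the loop.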

Whisper is released under the MIT License, making it suitable for both commercial and non-commercial projects. The Python package can be installed via pip and integrates with standard ML tooling including PyTorch. OpenAI has also made the model weights freely available, and the community has produced optimized variants such as faster-whisper (built on CTranslate2) for significantly improved inference speed.
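As an illustration of the optimized route, the sketch below uses faster-whisper, assuming it has been installed with `pip install faster-whisper`. The `WhisperModel` class and its `transcribe` method come from that package; `transcribe_fast` and `format_segments` are hypothetical helpers written for this example, and the `int8` CPU settings are just one plausible configuration.

```python
def format_segments(segments):
    """Render segments (objects with .start, .end, .text) as timestamped lines."""
    return [f"[{s.start:.2f}s -> {s.end:.2f}s] {s.text.strip()}" for s in segments]


def transcribe_fast(path, model_size="small"):
    """Transcribe with faster-whisper on CPU using int8 quantization.

    Hypothetical wrapper for illustration; adjust device and compute_type
    to match the available hardware.
    """
    from faster_whisper import WhisperModel  # lazy import: optional dependency

    model = WhisperModel(model_size, device="cpu", compute_type="int8")
    # transcribe() returns a lazy generator of segments plus metadata;
    # decoding actually happens while the generator is consumed.
    segments, info = model.transcribe(path, beam_size=5)
    return info.language, format_segments(segments)


if __name__ == "__main__":
    # "meeting.wav" is a placeholder path.
    language, lines = transcribe_fast("meeting.wav")
    print("Detected language:", language)
    print("\n".join(lines))
```

Because segments are produced lazily, a caller can also iterate over them directly and emit partial results as they are decoded instead of waiting for the whole file.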

For teams building production voice systems, Whisper is often used as the ASR component in a broader stack, paired with tools like LiveKit or Pipecat for real-time voice pipeline orchestration. Teams that prefer a hosted ASR service sometimes use Deepgram in its place.

Key Features

- Supports transcription and translation across approximately 100 languages

- Multiple model sizes (tiny through large-v3) to balance speed and accuracy

- Runs fully locally — no API key, no data sent to external servers

- MIT License — free for commercial and non-commercial use

- Built on a transformer encoder-decoder architecture trained on large-scale diverse audio

- Language detection built into the inference pipeline

- Python package with pip install and PyTorch-based inference

- Community-supported optimized variants (e.g., faster-whisper) for production performance

Pros & Cons

Pros

- Completely free and open-source under the MIT License with no usage costs

- Strong multilingual support covering roughly 100 languages out of the box

- Runs locally, keeping audio data private and removing dependency on third-party APIs

- Large, active open-source community with optimized forks and extensive documentation

- Flexible model sizes make it deployable on hardware ranging from laptops to servers

Cons

- Native implementation does not support real-time streaming; requires workarounds for live transcription

- Large models demand significant GPU resources for acceptable inference speed

- No hosted service or managed API — all infrastructure must be self-managed

- Word-level timestamps require additional tooling or the whisper-timestamped fork

- Transcription latency can be high on CPU for larger model variants

Pricing

Whisper is fully free and open-source under the MIT License. There are no licensing fees, subscription tiers, or usage costs. Compute costs are borne by whoever runs the model.

Who Is This For?

Whisper is best suited for developers and engineers building voice agent pipelines, transcription services, or multilingual audio processing workflows who need a production-capable ASR model without ongoing API costs or data privacy concerns. It is particularly well-matched for teams with access to GPU infrastructure who need broad language coverage, local deployment, or high-volume transcription workloads where managed API pricing would be prohibitive.